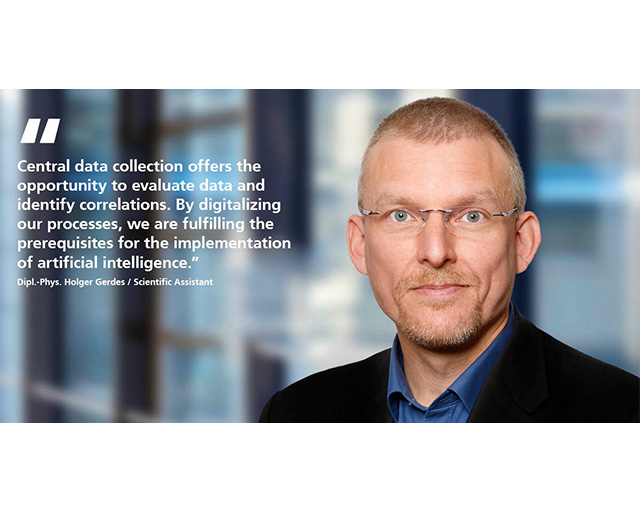

Implementation strategy for a data-mining project with CRISP-DM in surface technology

The CRISP-DM model (Cross-Industry Standard Process for Data Mining) is a widely used, cross-industry and publicly available standard process model for data mining.

The model was developed around 1996 by renowned companies and provides a sound framework for structuring and carrying out data-mining projects. At the Fraunhofer IST, we also use it for orientation in our digitalization projects. It is divided into six phases, and individual phases can be run through repeatedly. The phases are described briefly below and illustrated with examples from projects at the Fraunhofer IST.

Phase 1: Business understanding

The first phase is a fundamental prerequisite for successfully completing a CRISP-DM project in surface technology. In this phase, the objectives and requirements of the data-mining project are defined. The objective should be “SMART”, i.e. specific, measurable, accepted, realistic and time-bound. Only then is it possible to determine, once the project is complete, whether the data mining has really been successful.

With decades of experience and more than 200 employees, the Fraunhofer IST has extensive expertise in coating and surface technology and has already implemented numerous digitalization projects addressing a wide variety of issues. One example is the integration of commercially available environmental sensors, connected via Wi-Fi using Message Queuing Telemetry Transport (MQTT), to record room temperature, air humidity and air pressure. The readings are stored automatically in databases, and employees are notified automatically by email when a value rises above or falls below its threshold.
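The threshold check in such a monitoring setup can be sketched as follows. This is a minimal illustration, not the implementation used at the Fraunhofer IST: the JSON payload layout, the field names and the limit values are all assumptions, and the MQTT subscription and the email dispatch are deliberately left out.

```python
import json

# Hypothetical limits (low, high) per measured quantity; real values would
# come from the requirements of the laboratory in question.
LIMITS = {
    "temperature_c": (18.0, 26.0),
    "humidity_pct": (30.0, 60.0),
    "pressure_hpa": (980.0, 1040.0),
}


def check_reading(payload: bytes, limits=LIMITS) -> list[str]:
    """Return one alert message per value that is missing or out of range."""
    reading = json.loads(payload)
    alerts = []
    for name, (low, high) in limits.items():
        value = reading.get(name)
        if value is None:
            alerts.append(f"{name}: value missing")
        elif value < low:
            alerts.append(f"{name}: {value} is below the lower limit {low}")
        elif value > high:
            alerts.append(f"{name}: {value} is above the upper limit {high}")
    return alerts


# Example payload as a sensor might publish it (assumed format).
sample = b'{"temperature_c": 27.5, "humidity_pct": 45.0, "pressure_hpa": 1013.2}'
alerts = check_reading(sample)
print(alerts)
```

In a real deployment, a function like `check_reading` would be called from the message callback of an MQTT client, and a non-empty result would trigger the notification.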

Phase 2: Data understanding

In the second phase, the project objective is compared with the existing data sets. A decision must be made as to whether the data are sufficient to achieve the project objective with a good chance of success. If all necessary data are available, the next phase can begin. If the available data are insufficient, either the project objective must be redefined or the missing data must be entered or collected subsequently.
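A simple way to support this decision is to measure how completely the required variables are filled. The sketch below is only an illustration: the field names, the sample records and the thresholds `min_rows` and `min_coverage` are hypothetical and would have to be derived from the actual project objective.

```python
def coverage(records, required_fields):
    """Share of records with a non-missing value, per required field."""
    total = len(records)
    result = {}
    for field in required_fields:
        present = sum(1 for record in records if record.get(field) is not None)
        result[field] = present / total if total else 0.0
    return result


def is_sufficient(records, required_fields, min_rows, min_coverage):
    """True if there are enough records and every field is filled often enough."""
    if len(records) < min_rows:
        return False
    shares = coverage(records, required_fields)
    return all(share >= min_coverage for share in shares.values())


# Hypothetical coating runs; None marks a value that was never recorded.
records = [
    {"pressure_pa": 0.5, "power_w": 1000.0, "thickness_nm": 120.0},
    {"pressure_pa": 0.6, "power_w": 1200.0, "thickness_nm": None},
    {"pressure_pa": 0.5, "power_w": 900.0, "thickness_nm": 95.0},
    {"pressure_pa": 0.7, "power_w": 1100.0, "thickness_nm": 130.0},
]
required = ["pressure_pa", "power_w", "thickness_nm"]
report = coverage(records, required)
print(report)
```

A report like this shows which variables have gaps and therefore whether the objective needs to be redefined or more data collected.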

For this purpose, the Fraunhofer IST relies, amongst other things, on Open Platform Communications Unified Architecture (OPC UA) servers for data provision. These servers are already in place on newly acquired plants and have also been successfully retrofitted to existing plants. They enable process parameters to be recorded and backed up automatically.

The data are saved in central databases which also contain additional information, e.g. on the environmental conditions in the laboratory such as temperature, humidity and air pressure. Furthermore, web pages are made available to employees at the Fraunhofer IST as an upload front end. These upload pages accept practically any document format and store the uploaded content in the databases in prepared form.

Phase 3: Data preparation

Once the first two phases have confirmed that the data are suitable for the project objective, the data can be prepared for the subsequent steps. The objective of the third phase is to provide the modeling phase with a data set containing all necessary, correctly formatted values. To achieve this, the often heterogeneous data sources must be merged, errors in the data sets suitably corrected and, if necessary, new variables derived.
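The three preparation steps named above (merging, error correction, deriving new variables) can be sketched in a few lines. The data, the field names and the plausibility rule are assumptions made for illustration; in particular, discarding implausible rows is only one possible way of handling errors.

```python
# Two hypothetical sources that share a run identifier.
process_log = [
    {"run_id": "R1", "deposition_time_s": 600.0},
    {"run_id": "R2", "deposition_time_s": -1.0},  # implausible logged value
    {"run_id": "R3", "deposition_time_s": 300.0},  # no measurement available
]
measurements = [
    {"run_id": "R1", "thickness_nm": 120.0},
    {"run_id": "R2", "thickness_nm": 80.0},
    {"run_id": "R4", "thickness_nm": 50.0},  # no matching process record
]


def prepare(process_log, measurements):
    """Merge both sources on run_id, drop bad rows, derive a deposition rate."""
    thickness_by_run = {m["run_id"]: m["thickness_nm"] for m in measurements}
    prepared = []
    for row in process_log:
        run_id = row["run_id"]
        time_s = row["deposition_time_s"]
        if run_id not in thickness_by_run:
            continue  # merge step: keep only runs present in both sources
        if time_s <= 0:
            continue  # correction step: discard physically implausible times
        thickness = thickness_by_run[run_id]
        prepared.append({
            "run_id": run_id,
            "deposition_time_s": time_s,
            "thickness_nm": thickness,
            # derived variable: average deposition rate
            "rate_nm_per_s": thickness / time_s,
        })
    return prepared


dataset = prepare(process_log, measurements)
print(dataset)
```

For larger data sets the same steps are typically expressed with a data-frame library or directly in SQL, but the logic stays the same.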

For this purpose, the Fraunhofer IST has, for example, developed software tools which automatically divide large microscope images into smaller tiles, adjust their brightness, contrast and color space, and add further information to the file names. If required, data processing can also be performed within the databases. In this case, new tables are created which contain the aggregated information.
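The tiling and file-naming step can be illustrated with a small sketch. It is not the Fraunhofer IST tool: the tile size, the naming scheme and the `sample_id` parameter are hypothetical. The boxes use the `(left, upper, right, lower)` convention that, for example, Pillow's `Image.crop` accepts, so they could be passed to an imaging library directly. Brightness, contrast and color-space adjustments are omitted here.

```python
from pathlib import Path


def tile_boxes(width, height, tile):
    """Yield (left, upper, right, lower) boxes covering a width x height image.

    Tiles at the right and bottom edges are smaller if the image size is not
    a multiple of the tile size.
    """
    for upper in range(0, height, tile):
        for left in range(0, width, tile):
            yield (left, upper, min(left + tile, width), min(upper + tile, height))


def tile_name(source, box, sample_id):
    """Build a tile file name that carries the sample ID and tile position."""
    path = Path(source)
    left, upper, _, _ = box
    return f"{path.stem}_{sample_id}_x{left}_y{upper}{path.suffix}"


# A hypothetical 2500 x 1000 pixel image split into tiles of up to 1000 pixels.
boxes = list(tile_boxes(2500, 1000, 1000))
names = [tile_name("scan_01.png", box, "S42") for box in boxes]
print(boxes)
print(names)
```

Encoding the position in the file name keeps each tile traceable to its place in the original image without a separate lookup table.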

Phase 4: Modeling

In the modeling phase, a suitable method for solving the problem is sought. Possible methods range from simple statistics and semi-empirical models to machine-learning algorithms, including neural networks.

At the Fraunhofer IST, machine-learning algorithms are applied in cooperation with partners for the prediction of layer properties and process parameters, and neural networks are used in image recognition.

Phase 5: Evaluation

In the modeling phase, only the model itself is tested. In the evaluation phase, the aim is to test the entire processing routine and to clarify whether the whole chain, from data acquisition through processing to modeling, functions reliably. These so-called pipelines should also be robust against errors such as missing data.
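What testing the whole pipeline against missing data can look like is sketched below. The pipeline is a deliberately trivial stand-in (a cleaning step followed by a mean-value "model"); the function names are hypothetical and only show the kind of checks an evaluation might include.

```python
import math


def clean(values):
    """Processing step: drop missing entries (None or NaN)."""
    return [v for v in values if v is not None and not math.isnan(v)]


def predict(values):
    """Stand-in model: the mean of the cleaned values."""
    return sum(values) / len(values)


def run_pipeline(raw_values):
    """Run cleaning and modeling; return None instead of failing on no data."""
    usable = clean(raw_values)
    if not usable:
        return None
    return predict(usable)


# Evaluation-style checks on the complete pipeline, not only on the model.
complete = run_pipeline([1.0, 2.0, 3.0])
with_gaps = run_pipeline([1.0, None, float("nan"), 3.0])
no_data = run_pipeline([None, float("nan")])
print(complete, with_gaps, no_data)
```

The point of the design is that gaps in the input lead to a defined result rather than an unhandled exception somewhere between acquisition and modeling.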

Phase 6: Deployment

In the final phase, the project is integrated into the company's processes. All digitalization projects developed at the Fraunhofer IST are packaged in Docker containers and can therefore be ported very easily to other systems.